The Promise and the Peril of the New Digital Revolution

The new global technology race raises an urgent question: are we ready to trust the intelligence we have created?

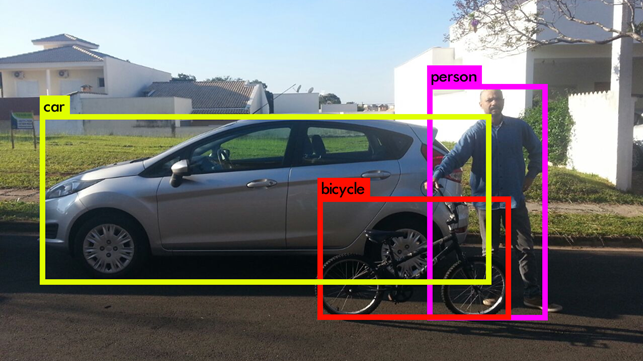

I like to introduce Computer Vision as the ability to give machines sight of the space around them: making a device able to recognize, identify, and extract data from videos or images.

In the past, this task demanded strong mathematical and programming skills. It was extensive work that started from image capture, image quality and content, color, and light, and required understanding how each of these elements affects the function of vision. The goal was to make the machine see as efficiently as the human brain does through our eyes.

Today there are many programming libraries for building software and solutions in this field. What I want to share in this article is the newest software that has been revolutionizing Computer Vision: version 4 of YOLO.

If you have not heard of YOLO yet, it is a Computer Vision technology that has been gaining prominence in object detection. The software is free, and its entire architecture and source code are available to everyone on the internet.

The technique, released in 2015 by Joseph Redmon and Ali Farhadi, was highly innovative because it delivered accuracy similar or superior to its competitors while running in real time (around 30 fps, frames per second). Many studies reported it running about 10 times faster than the most accurate methods available at the time of its release. In 2017 I took part in tests on the supercomputers of the day involving IBM and NVIDIA ( https://exame.com/tecnologia/brasileiro-ajuda-ibm-e-nvidia-a-dar-olhos-para-a-computacao/ ).

When it was released in April 2020, YOLO version 4 was considered the state of the art, because it offers higher accuracy when classifying and localizing objects in real time. The following video shows the results of the current version as well as the evolution of the YOLO technology:

The evolution of Yolo - v2,v3 and v4 - YouTube

As the video above shows, if a pedestrian were wearing a T-shirt printed with the pattern used as a proof of concept in the lab tests, an autonomous vehicle that processed objects with the earlier versions could run over a person (assuming the vehicle had no complementary technologies such as sensors).

YOLO was revolutionary and gained notoriety through a TED Talk in which Redmon demonstrated the first version in real time. In that demonstration, the author convinced the world of its efficiency by classifying and localizing up to 80 object categories on a GPU at roughly 30 fps.

https://www.youtube.com/watch?v=Cgxsv1riJhI

YOLO is not a network but a detection method. Its main difference from other methods (such as Haar Cascade or HOG) is that a single scan is enough to detect the object and locate the region it occupies. That is where the name comes from: You Only Look Once.
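To make "detect and locate in a single scan" more concrete, here is a minimal sketch of how the result of one forward pass could be turned into labeled boxes. The row layout (normalized center x, center y, width, height, objectness, then one score per class) and the names `decode_row` and `decode_output` are assumptions for illustration, modeled on common YOLO post-processing, not the exact Darknet code. Multiplying objectness by the class score is also just one common convention.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, width, height) in pixels


def decode_row(row: List[float], img_w: int, img_h: int) -> Tuple[int, float, Box]:
    """Turn one prediction row into (class_id, score, box).

    Assumed layout: [cx, cy, w, h, objectness, class_0, class_1, ...],
    with the first four values normalized to the range [0, 1].
    """
    cx, cy, w, h, objectness = row[:5]
    class_scores = row[5:]
    # Classification: pick the class with the highest score.
    class_id = max(range(len(class_scores)), key=class_scores.__getitem__)
    score = objectness * class_scores[class_id]
    # Localization: scale the normalized box back to pixel coordinates.
    box_w = int(round(w * img_w))
    box_h = int(round(h * img_h))
    left = int(round(cx * img_w - box_w / 2))
    top = int(round(cy * img_h - box_h / 2))
    return class_id, score, (left, top, box_w, box_h)


def decode_output(rows: List[List[float]], img_w: int, img_h: int,
                  min_score: float = 0.5) -> List[Tuple[int, float, Box]]:
    """Keep only the rows whose combined score reaches min_score."""
    detections = []
    for row in rows:
        class_id, score, box = decode_row(row, img_w, img_h)
        if score >= min_score:
            detections.append((class_id, score, box))
    return detections
```

Both the class and the box come out of the same row, which is the point: nothing is re-run per region.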

In other words, class predictions are made in a single pass through the network. Earlier methods were slow because they split the image into many regions and submitted each region to a classifier one by one, up to thousands of times over the same image (a technique known as sliding window).
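A rough way to see the cost of the sliding-window approach is to count how many times the classifier would have to run on one image. The sketch below does only that arithmetic. The image size, window sizes, and stride are illustrative assumptions, not values from any specific detector.

```python
from typing import Iterable, Tuple


def count_sliding_windows(img_w: int, img_h: int,
                          win_w: int, win_h: int, stride: int) -> int:
    """Number of window positions for one window size over one image."""
    if win_w > img_w or win_h > img_h:
        return 0
    cols = (img_w - win_w) // stride + 1
    rows = (img_h - win_h) // stride + 1
    return cols * rows


def classifier_calls(img_w: int, img_h: int,
                     window_sizes: Iterable[Tuple[int, int]], stride: int) -> int:
    """Total classifier invocations across all window sizes."""
    return sum(count_sliding_windows(img_w, img_h, w, h, stride)
               for w, h in window_sizes)


if __name__ == "__main__":
    # Hypothetical setup: a 640x480 frame, three square window sizes, stride 16.
    calls = classifier_calls(640, 480, [(64, 64), (128, 128), (256, 256)], stride=16)
    print(f"sliding window: {calls} classifier calls; single pass: 1 network call")
```

With these assumed settings the classifier would run 2133 times on a single frame, against one forward pass for a single-shot method.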

The implementation was written in C, and the project was named Darknet. It is fully open source and supports GPUs. The project is available at https://github.com/AlexeyAB/darknet
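For readers who prefer Python to building Darknet from source, one possible route is OpenCV's DNN module, which can load Darknet configuration and weight files. The sketch below is a minimal example under stated assumptions: the file names `yolov4.cfg` and `yolov4.weights`, the 416x416 input size, and both thresholds are placeholders chosen for illustration, and the function name `detect_objects` is hypothetical. It assumes a recent OpenCV 4.x build and that the model files were downloaded from the Darknet repository.

```python
from typing import List, Tuple


def detect_objects(image_path: str,
                   cfg_path: str = "yolov4.cfg",          # assumed local file
                   weights_path: str = "yolov4.weights",  # assumed local file
                   conf_threshold: float = 0.5,
                   nms_threshold: float = 0.4
                   ) -> List[Tuple[int, float, Tuple[int, ...]]]:
    """Run a Darknet YOLO model on one image via OpenCV's DNN module.

    Returns a list of (class_id, confidence, (left, top, width, height)).
    """
    # Imported here so the module can be loaded without OpenCV installed.
    import cv2
    import numpy as np

    net = cv2.dnn.readNetFromDarknet(cfg_path, weights_path)
    model = cv2.dnn_DetectionModel(net)
    # Scale pixels to [0, 1] and swap BGR to RGB, as Darknet models expect.
    model.setInputParams(size=(416, 416), scale=1 / 255.0, swapRB=True)

    image = cv2.imread(image_path)
    if image is None:
        raise FileNotFoundError(image_path)

    class_ids, scores, boxes = model.detect(
        image, confThreshold=conf_threshold, nmsThreshold=nms_threshold)

    # Flatten the results, whose shape varies between OpenCV versions.
    class_ids = np.asarray(class_ids).reshape(-1)
    scores = np.asarray(scores).reshape(-1)
    boxes = np.asarray(boxes).reshape(-1, 4)
    return [(int(c), float(s), tuple(int(v) for v in b))
            for c, s, b in zip(class_ids, scores, boxes)]
```

A call such as `detect_objects("street.jpg")` (a hypothetical image) would return one tuple per detected object. Mapping class ids to names requires the label file that matches the weights.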

Version 4 was released by different authors, because in February 2020 one of the original authors announced he was stopping his computer vision research because of its impact on society and the way his technology was being used in the market ( https://twitter.com/pjreddie/status/1230524770350817280 ).

YOLOv4 was published by Alexey Bochkovskiy, Chien-Yao Wang, and Hong-Yuan Mark Liao. Its main advantages are gains in inference speed and accuracy. Another advantage of this version is that it applies more effective techniques for running on GPUs, optimized to use less memory. In short: faster and more accurate than EfficientDet and RetinaNet/MaskRCNN on the COCO dataset.

This technology keeps gaining ground. Industry, scientists, and researchers around the world use it in many fields, such as robotics, medicine, and agribusiness. YOLO is used for object detection on many platforms and in many segments, such as mobile apps, autonomous cars, and others.

Incredible, isn't it? If you want to go deeper into the subject, come take the Fundamentals of Computer Vision course with me and learn how to innovate with these incredible technologies: https://www.i2ai.org/course/19/detail/

YOLOv4 Paper – https://arxiv.org/abs/2004.10934

YOLOv4 Real-Time Object Detection – https://github.com/AlexeyAB/darknet

YOLOv4 on OpenCV – https://docs.opencv.org/master/da/d9d/tutorial_dnn_yolo.html

Co-founding partner of OITI TECHNOLOGIES and researcher whose first contact with technology was in 1983, at age 11. Takes Linux seriously; has been researching and working with biometrics and computer vision since 1998, with experience in facial biometrics since 2003 and in artificial neural networks and neurotechnology since 2009. Inventor of the CERTIFACE technology, with more than 100 talks given, 14 articles published in print, and more than 8 million views across 120 published articles. Lecturer at FIA, official Mozillians member, official member and OpenSUSE Linux Ambassador for Latin America, member of the OWASP SP board, contributor to the OpenCV library, Intel OneAPI Global Official Innovator, Notable Member of I2AI, founder of the Global openSUSE Linux INNOVATOR initiative, and mentor at Cybersecuritygirls BR.
